CIS 601: Special Topics in Computer Architecture: GPGPU Programming (Spring 2017)

Course Information

instructor: Joe Devietti

when: Tuesday/Thursday 10:30-noon

where: Towne 321

contact: Piazza, email, Canvas

office hours:

- Tuesday 2-3pm, Levine 572

Course Description

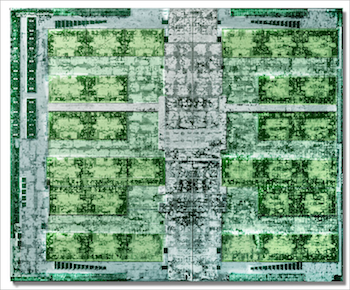

Graphics Processing Units (GPUs) have become extremely popular and are used to accelerate an increasingly diverse set of non-graphics workloads. This seminar will examine modern GPU architectures, the programming models used to write general-purpose code for GPUs, and the complexities of programming such highly parallel architectures. There will be a special emphasis on concurrency correctness issues as they relate to GPUs, including GPU memory consistency models and GPU concurrency bugs. Graduate-level coursework in computer architecture (e.g., CIS 501) will be very helpful.

Course Materials

No textbooks are required; links to all readings will be provided on this website.

Grading

- Project: 40%

- Participation: 20%

- Assignments: 15%

- Future work write-ups: 15%

- Paper questions: 10%

There will be no exams.

Submit homework, paper questions, and future-work write-ups via Canvas.

The class project can be done alone or in groups of 2. The project is open-ended: it should be something related to GPUs but the specifics are up to you. Choosing a project that incorporates your interests (research or otherwise) is a great idea!

Course Schedule

This schedule is subject to change.

| Date | Topic | Presenter |
|---|---|---|
| Thursday 12 Jan | Intro | Joe |
| Tuesday 17 Jan | CUDA Basics | Joe |
| Thursday 19 Jan | GPU Architecture Overview | Joe |
| Tuesday 24 Jan | GPU Architecture Overview (continued) | Joe |
| Thursday 26 Jan | GPU Architecture Overview (continued) | Joe |
| Tuesday 31 Jan | Real-world GPU Architectures | Joe |
| Thursday 2 Feb | CUDA Performance Tuning: Nsight video tutorial (memory profiling starts at 41:40), Nsight documentation | Joe |
| Tuesday 7 Feb | No class: Joe traveling | - |
| Thursday 9 Feb | No class: Joe traveling | - |
| Tuesday 14 Feb | CUDA miscellany, profiling | Joe |
| Thursday 16 Feb | CUDA Synchronization | Joe |
| Tuesday 21 Feb | A Primer on Memory Consistency and Cache Coherence, Chapters 3-5 (SC, TSO, RC) | Joe |
| Thursday 23 Feb | No class: Joe traveling | - |
| Tuesday 28 Feb | Heterogeneous-Race-Free Memory Models (slides) | JJ, Max |
| Thursday 2 Mar | GPU concurrency: Weak Behaviours and Programming Assumptions (slides) | JJ, Max |
| Tuesday 7 Mar | No class: spring break | - |
| Thursday 9 Mar | No class: spring break | - |
| Tuesday 14 Mar | Debunking the 100X GPU vs. CPU myth: an evaluation of throughput computing on CPU and GPU (slides) | Omar, Richard |
| Thursday 16 Mar | Rodinia Benchmark Suite (slides) | Grayson, Shreyas, Akshay |
| Tuesday 21 Mar | MemcachedGPU: Scaling-up Scale-out Key-value Stores | DJ, Hans |
| Thursday 23 Mar | GPUfs (slides) | Shreyas, Yishuai |
| Tuesday 28 Mar | Dynamic Warp Formation (slides) | Eric, Grayson, Romita |
| Thursday 30 Mar | Dynamic Warp Subdivision for Integrated Branch and Memory Divergence Tolerance | Kavya, Richard |
| Tuesday 4 Apr | The Dual-Path Execution Model for Efficient GPU Control Flow (slides) | DJ, Hans, Romita |
| Thursday 6 Apr | Cache-Conscious Wavefront Scheduling | Kavya, Akshay |
| Tuesday 11 Apr | Unifying Primary Cache, Scratch, and Register File Memories in a Throughput Processor | Archana, Shveta |
| Thursday 13 Apr | Cache Coherence for GPU Architectures | Archana, Yishuai |
| Tuesday 18 Apr | Towards high performance paged memory for GPUs | Eric, Omar |
| Thursday 20 Apr | Project presentations | - |
| Tuesday 25 Apr | Project presentations | - |

Project Ideas

- Investigate time savings from approximate GPU computing. Consider replacing data types with narrower-width versions, e.g., converting 64-bit doubles to 32-bit floats, or 16-bit integers to 8-bit integers. How does this affect running time and accuracy of the computation?

- Investigate scalable locking in CUDA, from simple spin-locks to something like MCS locks. The lack of cache coherence on GPUs should add an interesting wrinkle. Useful resources are Michael Scott’s webpage and the SSync library from EPFL.

- Port an application of interest to you to CUDA.

- Your idea here!
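As a starting point for the approximate-computing idea above, here is a minimal host-side C++ sketch of how the accuracy half of that question might be measured. It is an illustration only, not part of the course materials: the function names `harmonic_sum` and `relative_error` are hypothetical, the harmonic series is an arbitrary stand-in workload, and a real project would run the computation inside a CUDA kernel and time it as well.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// Hypothetical stand-in workload: sum of 1/(i+1) for i in [0, n),
// with every operation carried out in type T (e.g., float or double).
template <typename T>
T harmonic_sum(std::size_t n) {
    T acc = 0;
    for (std::size_t i = 0; i < n; ++i) {
        acc += static_cast<T>(1) / static_cast<T>(i + 1);
    }
    return acc;
}

// Relative error of the result computed in type Narrow, measured
// against the same computation done in double precision.
template <typename Narrow>
double relative_error(std::size_t n) {
    assert(n > 0);  // the reference sum must be nonzero
    const double ref = harmonic_sum<double>(n);
    const double approx = static_cast<double>(harmonic_sum<Narrow>(n));
    return std::fabs(approx - ref) / std::fabs(ref);
}
```

Calling `relative_error<float>(1000000)` reports how far the 32-bit result drifts from the 64-bit reference. Templating on the element type keeps the narrow and wide versions of the computation identical apart from the data type, which is the comparison the project idea asks about.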