CIS 601: Special Topics in Computer Architecture: GPGPU Programming, Spring 2016

Instructor

Office Hours: by appointment in Levine 572

Class discussion and announcements take place on Piazza.

When & Where

Tuesday/Thursday, 10:30 am-12:00 noon, Towne 321

Course Description

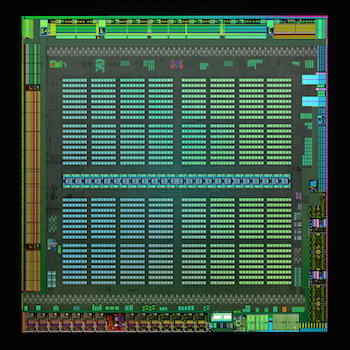

Graphics Processing Units (GPUs) have become extremely popular and are used to accelerate an increasingly diverse set of non-graphics workloads. This seminar will examine modern GPU architectures, the programming models used to write general-purpose code for GPUs, and the complexities of programming such highly parallel architectures. There will be a special emphasis on concurrency correctness issues as they relate to GPUs, including GPU memory consistency models and GPU concurrency bugs. Graduate-level coursework in computer architecture (e.g., CIS 501) will be very helpful.

Course Materials

No textbooks are required; links to all readings will be provided at this website.

Grading

- Project: 40%

- Participation: 20%

- Assignments: 15%

- Future work write-ups: 15%

- Reading quizzes: 10%

There will be no exams.

Submit assignments, reading quizzes, and future work write-ups via Canvas.

The class project can be done in groups of up to 2. The project is open-ended: it should be something related to GPUs but the specifics are up to you. Choosing a project that incorporates your interests (research or otherwise) is a great idea!

Schedule

This schedule is subject to change.

Many of the paper links below point to publisher sites (such as the ACM Digital Library). You’ll need to download the papers from an on-campus computer or via the UPenn Library proxy.

Project Ideas

- Investigate time savings from approximate GPU computing. Consider replacing data types with narrower-width versions, e.g., converting 64-bit doubles to 32-bit floats, or 16-bit integers to 8-bit integers. How does this affect running time and accuracy of the computation?

- Investigate CUDA-MEMCHECK, a tool for detecting errors in CUDA programs. How much performance overhead does it add? What kinds of bugs does it catch, and what kinds does it miss?

- Investigate scalable locking in CUDA, from simple spin-locks to something like MCS locks. The lack of cache coherence on GPUs should add an interesting wrinkle. Useful resources are Michael Scott’s webpage and the SSync library from EPFL.

- Port an application of interest to you to CUDA.

- Your idea here!
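As a possible starting point for the first idea above (narrower data types), here is a minimal host-side C++ sketch that measures only the accuracy half of the question. It sums the same input once with a 64-bit `double` accumulator and once with a 32-bit `float` accumulator, then reports the relative error. The names `narrow_sum`, `Comparison`, and `compare_float_vs_double` are hypothetical, the harmonic-series input is an arbitrary choice, and nothing here runs on a GPU; a real project would port the loop to a CUDA kernel and add timing.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper: sums xs using an accumulator of type T.
// Each element is narrowed to T before it is added.
template <typename T>
T narrow_sum(const std::vector<double>& xs) {
    T acc = 0;
    for (double x : xs) {
        acc += static_cast<T>(x);
    }
    return acc;
}

// Result of comparing a narrowed computation against the double reference.
struct Comparison {
    double reference;  // sum computed with a double accumulator
    double narrowed;   // sum computed with a float accumulator
    double rel_error;  // |narrowed - reference| / |reference|
};

// Sums 1/1 + 1/2 + ... + 1/n in both precisions (illustrative input only).
Comparison compare_float_vs_double(std::size_t n) {
    assert(n > 0);
    std::vector<double> xs(n);
    for (std::size_t i = 0; i < n; ++i) {
        xs[i] = 1.0 / static_cast<double>(i + 1);
    }
    const double ref = narrow_sum<double>(xs);
    const double nar = static_cast<double>(narrow_sum<float>(xs));
    return {ref, nar, std::fabs(nar - ref) / std::fabs(ref)};
}
```

Calling `compare_float_vs_double(1000000)` and inspecting `rel_error` gives one data point for the accuracy side of the trade-off. The same pattern extends to the integer case (16-bit versus 8-bit) by swapping the template argument, though overflow behavior would then need separate attention.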